ENEA LINUX NETWORKING PROFILE

Technology trends show that Linux-based operating systems have increased their presence in high-performance networking applications. While there is no standardized way of programmatically accessing hardware offload capabilities, several paradigms coexist in the Linux ecosystem to address this need (e.g. USDPAA, DPDK, ODP). The Networking Profile in Enea Linux is a framework for anyone implementing high-performance networking applications on various hardware platforms. It aims to provide all the building blocks needed for efficient development of Linux-based solutions on network-accelerated hardware platforms. Because different hardware platforms have distinct data-path acceleration solutions, the Networking Profile implementation depends heavily on the capabilities of the underlying hardware.

This document describes the implementation details, changes, additions, kernel configurations and tunings Enea made to achieve highly optimized Linux distributions for networking applications.

The following sections focus on the Enea Linux Networking Profile on DPAA-based QorIQ platforms, illustrating the implementation and changes on the NXP P2041RDB target.

Table of Contents

-------------------------------------------

1. Supported Targets

2. Improving Real-Time Performance

------- 2.1 Kernel Modifications

------- 2.2 CPU-Isolation with partrt

------- 2.3 Latency Benchmarks

3. USDPAA Usage

------- 3.1 Packages

------- 3.2 Prepare Target

------- 3.3 Device Trees

------- 3.4 Boot Parameters

------- 3.5 Boot Instructions P2041RDB

------- 3.6 Run Reflector

------- 3.7 Run SRA

------- 3.8 Throughput using USDPAA

1. Supported Targets

Enea Linux Networking Profile has initially been tested on p2041rdb.

2. Improving Real-Time Performance

2.1 Kernel Modifications

When modifying a kernel for high-performance and low-latency applications, there are several aspects to take into consideration. The Enea Linux Real-Time Guide gives a thorough investigation and explanation of how to optimize Linux for low latency. Below is a short description of the kernel features added specifically to the Enea Linux Networking Profile to enhance real-time performance.

Table 2.1 Added kernel features and their properties.

| Change | Reason |

|---|---|

| RCU priority boosting -> cfg/rcu_boost.cfg | Temporarily give low-priority readers a higher priority to keep them from blocking higher-priority tasks. [RCU] |

| Offload RCU callback Processing -> cfg/rcu_nocb | To reduce OS jitter, enable offloading of RCU callback processing to kernel threads. The rcu_nocbs boot parameter is used to define the set of CPUs to be offloaded. |

| Hotplug CPU -> cfg/hotplug_cpu.cfg | Allows CPUs to be added to/removed from a live kernel. [HOTPLUG] |

2.1.1 Boot Parameters

From [KERN-PARA]:

rcu_nocbs= [KNL]

In kernels built with CONFIG_RCU_NOCB_CPU=y, set

the specified list of CPUs to be no-callback CPUs.

Invocation of these CPUs' RCU callbacks will

be offloaded to "rcuox/N" kthreads created for

that purpose, where "x" is "b" for RCU-bh, "p"

for RCU-preempt, and "s" for RCU-sched, and "N"

is the CPU number. This reduces OS jitter on the

offloaded CPUs, which can be useful for HPC and

real-time workloads. It can also improve energy

efficiency for asymmetric multiprocessors.

isolcpus= [KNL,SMP] Isolate CPUs from the general scheduler.

Format:

<cpu number>,...,<cpu number>

or

<cpu number>-<cpu number>

(must be a positive range in ascending order)

or a mixture

<cpu number>,...,<cpu number>-<cpu number>

This option can be used to specify one or more CPUs

to isolate from the general SMP balancing and scheduling

algorithms. You can move a process onto or off an

"isolated" CPU via the CPU affinity syscalls or cpuset.

<cpu number> begins at 0 and the maximum value is

"number of CPUs in system - 1".

This option is the preferred way to isolate CPUs. The

alternative -- manually setting the CPU mask of all

tasks in the system -- can cause problems and

suboptimal load balancer performance.
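The CPU list format described above (single CPUs, ascending ranges, or a mixture) can be illustrated with a small parser. This is a minimal sketch, not kernel code; the function names `parse_cpu_list` and `pin_current_process` are hypothetical helpers introduced here for illustration.

```python
import os


def parse_cpu_list(spec: str) -> list[int]:
    """Expand a kernel-style CPU list such as "1,3-5" into [1, 3, 4, 5]."""
    cpus: set[int] = set()
    for part in spec.split(","):
        part = part.strip()
        if not part:
            raise ValueError(f"empty entry in CPU list: {spec!r}")
        if "-" in part:
            first_text, last_text = part.split("-", 1)
            first, last = int(first_text), int(last_text)
            if first > last:
                # The kernel documentation requires ascending ranges.
                raise ValueError(f"range must be ascending: {part!r}")
            cpus.update(range(first, last + 1))
        else:
            cpus.add(int(part))
    return sorted(cpus)


def pin_current_process(cpus: list[int]) -> None:
    """Move the calling process onto the given CPUs (Linux only)."""
    os.sched_setaffinity(0, set(cpus))
```

For example, on a four-core target booted with isolcpus=1-3, `parse_cpu_list("1-3")` yields the isolated CPUs, and `pin_current_process([2])` would then move the calling process onto CPU 2 using the affinity syscall mentioned in the parameter description.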

2.2 CPU-Isolation with partrt

A tool called partrt is included in the networking profile to divide an SMP Linux system into partitions. A description of the tool can be found in the Enea Linux Real-Time Guide.

2.3 Latency Benchmarks

Cyclictest [CYCLIC] is a widely used benchmark for measuring system latency. To investigate the impact of the changes, it was run on both Enea Linux 6.0 Standard and the Enea Linux Networking Profile.

The tables below present the average and maximum latency per CPU for both systems. Columns with the suffix _std refer to the standard profile and columns with the suffix _net to the networking profile. P is the thread priority, I the interval in microseconds and C the number of completed cycles. The tests are also combined with stress [STRESS] to show system performance under different types of load.

Command: Cyclictest with no stress

| CPU # | P | I | C_std | Avg_std (us) | Max_std (us) | C_net | Avg_net (us) | Max_net (us) |

|---|---|---|---|---|---|---|---|---|

| 0 | 99 | 1000 | 100000 | 9 | 24 | 100000 | 6 | 11 |

| 1 | 99 | 1500 | 66817 | 9 | 21 | 66812 | 6 | 12 |

| 2 | 99 | 2000 | 50208 | 9 | 23 | 50106 | 6 | 18 |

| 3 | 99 | 2500 | 40083 | 9 | 14 | 40082 | 6 | 10 |
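The relation between the I (interval) and C (cycle count) columns can be checked with simple arithmetic. The sketch below assumes that all threads run for the same wall-clock time as the thread on CPU 0; that assumption, and the helper name `expected_cycles`, are ours and are not stated in the benchmark description.

```python
def expected_cycles(reference_cycles: int, reference_interval_us: int,
                    interval_us: int) -> int:
    """Estimate a thread's cycle count from a reference thread's run."""
    # Total run time of the reference thread in microseconds.
    duration_us = reference_cycles * reference_interval_us
    return duration_us // interval_us


# Reference: CPU 0 completed 100000 cycles at a 1000 us interval (100 s in total).
for interval in (1500, 2000, 2500):
    print(interval, expected_cycles(100_000, 1000, interval))
```

The estimates for the 1500, 2000 and 2500 us intervals are 66666, 50000 and 40000 cycles, and the measured counts in the table above lie within about half a percent of these values.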

Command: cyclictest with hdd stress:

# stress -d 4 --hdd-bytes 1M &

| CPU # | P | I | C_std | Avg_std (us) | Max_std (us) | C_net | Avg_net (us) | Max_net (us) |

|---|---|---|---|---|---|---|---|---|

| 0 | 99 | 1000 | 100000 | 14 | 223 | 100000 | 11 | 77 |

| 1 | 99 | 1500 | 66820 | 14 | 231 | 66745 | 11 | 100 |

| 2 | 99 | 2000 | 50109 | 14 | 186 | 50055 | 11 | 76 |

| 3 | 99 | 2500 | 40083 | 14 | 176 | 40041 | 11 | 81 |

Command: cyclictest with vm stress:

# stress -m 4 --vm-bytes 4096 &

| CPU # | P | I | C_std | Avg_std (us) | Max_std (us) | C_net | Avg_net (us) | Max_net (us) |

|---|---|---|---|---|---|---|---|---|

| 0 | 99 | 1000 | 100000 | 5 | 20 | 100000 | 3 | 15 |

| 1 | 99 | 1500 | 66818 | 6 | 14 | 66739 | 3 | 7 |

| 2 | 99 | 2000 | 50109 | 6 | 14 | 50103 | 3 | 9 |

| 3 | 99 | 2500 | 40079 | 6 | 14 | 40081 | 3 | 6 |

Command: cyclictest with full stress trial 1:

# stress -c 4 -i 4 -m 4 --vm-bytes 4096 -d 4 --hdd-bytes 4096 &

| CPU # | P | I | C_std | Avg_std (us) | Max_std (us) | C_net | Avg_net (us) | Max_net (us) |

|---|---|---|---|---|---|---|---|---|

| 0 | 99 | 1000 | 99808 | 7 | 93 | 99815 | 6 | 58 |

| 1 | 99 | 1500 | 66733 | 9 | 105 | 66739 | 6 | 54 |

| 2 | 99 | 2000 | 50039 | 9 | 79 | 50039 | 7 | 61 |

| 3 | 99 | 2500 | 40016 | 10 | 83 | 40032 | 6 | 57 |

Command: cyclictest with full stress trial 2:

# stress -c 4 -i 4 -m 4 --vm-bytes 4096 -d 4 --hdd-bytes 1M &

| CPU # | P | I | C_std | Avg_std (us) | Max_std (us) | C_net | Avg_net (us) | Max_net (us) |

|---|---|---|---|---|---|---|---|---|

| 0 | 99 | 1000 | 100000 | 13 | 201 | 100000 | 10 | 87 |

| 1 | 99 | 1500 | 66646 | 11 | 186 | 66685 | 9 | 85 |

| 2 | 99 | 2000 | 49969 | 10 | 195 | 49998 | 10 | 70 |

| 3 | 99 | 2500 | 39960 | 11 | 112 | 39992 | 10 | 90 |
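To make the comparison between the two profiles easier to read, the relative reduction in latency can be computed from the table values. The following is a small worked example using the maximum latencies of the "full stress trial 2" table; the helper name `reduction_percent` is introduced here for illustration only.

```python
def reduction_percent(std_us: float, net_us: float) -> float:
    """Relative latency reduction of the networking profile, in percent."""
    return (std_us - net_us) / std_us * 100.0


# Maximum latency (us) per CPU from the "full stress trial 2" table,
# given as (standard profile, networking profile).
max_latency_us = {0: (201, 87), 1: (186, 85), 2: (195, 70), 3: (112, 90)}

for cpu, (std, net) in max_latency_us.items():
    print(f"CPU {cpu}: {reduction_percent(std, net):.1f}% lower maximum latency")
```

For this table the reduction in maximum latency ranges from roughly 20% (CPU 3) to roughly 64% (CPU 2).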

3. USDPAA Usage

Predictable, high performance is critical for networking systems. One way of achieving greater performance is for user space to avoid interactions with the kernel. Normally the kernel is responsible for allocating and scheduling hardware acceleration resources. Frameworks such as USDPAA [NXP-SDK] and DPDK [DPDK] instead give user space control over certain hardware. USDPAA is specific to NXP/Freescale's QorIQ platforms; for more information, see their guide to USDPAA [NXP-SDK].

The sections below show how to prepare a p2041rdb target with SRIO and Ethernet through USDPAA.

3.1 Packages

The networking profile includes all packages necessary for software support of USDPAA. If you create another image, include the packages listed below (all available in meta-fsl-ppc and meta-freescale) to add support for USDPAA and some example applications.

* usdpaa

* usdpaa-apps

* fmc

* fmlib

* flib

* eth-config

3.2 Prepare Target

The SRIO application requires booting with an RCW and a board configuration that allow use of the PCI extender port. The examples below are specific to p2041rdb, but similar steps can be taken for other targets where SRIO is not enabled by default.

3.2.1 Reset Control Word (RCW)

The reset control word must configure the serializer/deserializer (SERDES) bus for SRIO. This can be done either with a predefined binary/setting or with an RCW created in CodeWarrior [CW].

The RCW used in this example is the one given in the NXP/Freescale SRA User Guide in [NXP-SDK].

To program the RCW to the target from u-boot, follow the steps below:

=> tftp 1000000 <path-2-file>/RR_RS_0x02.bin

=> md 0xec000000

ec000000: aa55aa55 010e0100 12600000 00000000 .U.U.....`......

ec000010: 241c0000 00000000 248e40c0 c3c02000 $.......$.@... .

ec000020: de800000 40000000 00000000 00000000 ....@...........

ec000030: 00000000 d0030f07 00000000 00000000 ................

ec000040: 00000000 00000000 091380c0 000009c4 ................

ec000050: 09000010 00000000 091380c0 000009c4 ................

ec000060: 09000014 00000000 091380c0 000009c4 ................

ec000070: 09000018 81d00000 091380c0 000009c4 ................

ec000080: 890b0050 00000002 091380c0 000009c4 ...P............

ec000090: 890b0054 00000002 091380c0 000009c4 ...T............

ec0000a0: 890b0058 00000002 091380c0 000009c4 ...X............

ec0000b0: 890b005c 00000002 091380c0 000009c4 ...\............

ec0000c0: 890b0090 00000002 091380c0 000009c4 ................

ec0000d0: 890b0094 00000002 091380c0 000009c4 ................

ec0000e0: 890b0098 00000002 091380c0 000009c4 ................

ec0000f0: 890b009c 00000002 091380c0 000009c4 ................

=> protect off 0xec000000 +$filesize

Un-Protected 1 sectors

=> erase 0xec000000 +$filesize

. done

Erased 1 sectors

=> cp.b 1000000 0xec000000 $filesize

Copy to Flash... 9done

=> protect on 0xec000000 +$filesize

Protected 1 sectors

=> md 0xec000000

ec000000: aa55aa55 010e0100 12600000 00000000 .U.U.....`......

ec000010: 241c0000 00000000 088040c0 c3c02000 $.........@... .

ec000020: de800000 40000000 00000000 00000000 ....@...........

ec000030: 00000000 d0030f07 00000000 00000000 ................

ec000040: 00000000 00000000 091380c0 000009c4 ................

ec000050: 09000010 00000000 091380c0 000009c4 ................

ec000060: 09000014 00000000 091380c0 000009c4 ................

ec000070: 09000018 81d00000 091380c0 000009c4 ................

ec000080: 890b0050 00000002 091380c0 000009c4 ...P............

ec000090: 890b0054 00000002 091380c0 000009c4 ...T............

ec0000a0: 890b0058 00000002 091380c0 000009c4 ...X............

ec0000b0: 890b005c 00000002 091380c0 000009c4 ...\............

ec0000c0: 890b0090 00000002 091380c0 000009c4 ................

ec0000d0: 890b0094 00000002 091380c0 000009c4 ................

ec0000e0: 890b0098 00000002 091380c0 000009c4 ................

ec0000f0: 890b009c 00000002 091380c0 000009c4 ................

=>
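Comparing the two `md` dumps by eye is error-prone; in the listing above only the word at 0xec000018 differs before and after flashing. The sketch below shows one way to diff two captured dumps on a host PC. It is an illustration only: the helper names are hypothetical, and it assumes the console output has been saved as text with four 32-bit words per line, as in the listing.

```python
import re

# An address, a colon, then up to four 8-digit hex words (the ASCII column is ignored).
DUMP_LINE = re.compile(r"^\s*([0-9a-fA-F]{8}):((?:\s+[0-9a-fA-F]{8}){1,4})")


def parse_md_dump(text: str) -> dict[int, str]:
    """Map each 32-bit word address to its hex value from u-boot `md` output."""
    words: dict[int, str] = {}
    for line in text.splitlines():
        match = DUMP_LINE.match(line)
        if not match:
            continue  # prompts and status messages are skipped
        base = int(match.group(1), 16)
        for index, word in enumerate(match.group(2).split()):
            words[base + 4 * index] = word.lower()
    return words


def changed_words(before: str, after: str) -> list[tuple[int, str, str]]:
    """List (address, old, new) for every word that differs between two dumps."""
    old, new = parse_md_dump(before), parse_md_dump(after)
    return [(addr, old[addr], new[addr])
            for addr in sorted(old.keys() & new.keys())
            if old[addr] != new[addr]]


# The one line that differs between the two dumps in the listing above.
before = "ec000010: 241c0000 00000000 248e40c0 c3c02000 $.......$.@... ."
after = "ec000010: 241c0000 00000000 088040c0 c3c02000 $.........@... ."

for addr, old_word, new_word in changed_words(before, after):
    print(f"{addr:08x}: {old_word} -> {new_word}")
```

An empty result after flashing would suggest that the new RCW was not written, or that it is identical to the previous one.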

To obtain specific hardware settings, some multiplexers need to be set. Descriptions of these can be obtained by typing cpld -h in u-boot; information about the peripherals is also available in the board's user guide. For SRIO on the p2041rdb target the following settings are necessary:

cpld_cmd lane_mux 6 0

cpld_cmd lane_mux a 0

cpld_cmd lane_mux c 0

cpld_cmd lane_mux d 0

3.3 Device Trees

USDPAA-enabled targets have very specific device trees because, instead of being handed over to the Linux kernel, the hardware is managed by the DPAA framework.

The networking profile includes a custom device tree.

Available and tested device trees for p2041rdb:

- uImage-p2041rdb-usdpaa-enea.dtb: EL custom interface setup that gives one interface to the Linux kernel and the remaining interfaces to DPAA.

3.4 Boot Parameters

USDPAA requires some custom boot arguments. If they are missing or given incorrectly, the USDPAA applications will not be usable. The NXP/Freescale manual covers these arguments, but it can be misleading: in several places the instructions are not target agnostic and apply only to certain targets. If unsure, consult the benchmarking chapter of the NXP/Freescale SDK documentation, which includes more exact steps for each tested target.

For our purposes of testing SRIO and the reflector application we only need the 'usdpaa_mem' boot argument. If the reserved memory is too large, it will cause segmentation faults.

Table 3.1 Allowed 'usdpaa_mem' value per target.

| TARGET | usdpaa_mem |

|---|---|

| p2041rdb | <= 64M |
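Because an oversized reservation only shows up later as segmentation faults, it can be useful to check the boot arguments before booting. The following is a minimal sketch of such a check on a host PC. The helper names are hypothetical, and treating the K/M/G suffixes as binary multiples is an assumption based on how the kernel usually parses memory sizes.

```python
import re
from typing import Optional

# Assumption: suffixes are binary multiples, as in common kernel size parsing.
SUFFIXES = {"": 1, "K": 1024, "M": 1024 ** 2, "G": 1024 ** 3}


def parse_size(text: str) -> int:
    """Convert a size such as "64M" into a number of bytes."""
    match = re.fullmatch(r"(\d+)([KMG]?)", text.strip().upper())
    if not match:
        raise ValueError(f"unrecognised size: {text!r}")
    return int(match.group(1)) * SUFFIXES[match.group(2)]


def usdpaa_mem_from_bootargs(bootargs: str) -> Optional[int]:
    """Return the usdpaa_mem reservation in bytes, or None if it is absent."""
    for arg in bootargs.split():
        key, _, value = arg.partition("=")
        if key == "usdpaa_mem":
            return parse_size(value)
    return None


# Upper bound for p2041rdb taken from the table above.
P2041RDB_LIMIT = parse_size("64M")


def check_bootargs(bootargs: str) -> bool:
    """True if usdpaa_mem is present and within the p2041rdb limit."""
    size = usdpaa_mem_from_bootargs(bootargs)
    return size is not None and size <= P2041RDB_LIMIT
```

For instance, `check_bootargs("root=/dev/ram rw console=ttyS0,115200 usdpaa_mem=64M")` accepts the arguments, while the same string with usdpaa_mem=128M, or without usdpaa_mem at all, is rejected.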

3.5 Boot Instructions P2041RDB

Below are instructions on how to boot a p2041rdb board with USDPAA enabled.

3.5.1 Boot over NFS server

tftp 1000000 uImage-p2041rdb.bin

tftp c00000 uImage-p2041rdb-usdpaa-enea.dtb

setenv bootargs root=/dev/nfs nfsroot=172.21.3.8:/unix/enea_linux_rootfs/<folder-path> rw ip=dhcp console=ttyS0,115200 memmap=16M$0xf7000000 mem=4080M max_addr=f6ffffff usdpaa_mem=64M

bootm 1000000 - c00000

3.5.2 RAM Boot

tftp 1000000 uImage-p2041rdb.bin

tftp 2000000 uImage-p2041rdb-usdpaa.dtb

tftp 5000000 enea-image-networking-p2041rdb.ext2.gz.u-boot

setenv bootargs root=/dev/ram rw console=ttyS0,115200 ramdisk_size=10000000 log_buf_len=128K usdpaa_mem=64M

bootm 0x1000000 0x5000000 0x2000000

3.6 Run Reflector

Reflector is a demo application from NXP/Freescale that receives a packet over Ethernet and sends it back with the source and destination IP addresses swapped.
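The address swap itself is simple to illustrate. The sketch below is not the USDPAA reflector implementation; it only shows, on a hand-built IPv4 header, what swapping the source and destination addresses means. The helper names and the example header fields are assumptions made for illustration. A useful property is that the IPv4 header checksum stays valid after the swap, because the checksum is a sum over 16-bit words and the swap only reorders them.

```python
import socket
import struct


def ipv4_checksum(header: bytes) -> int:
    """One's-complement checksum over the header; 0 for a valid header."""
    total = sum(struct.unpack(f"!{len(header) // 2}H", header))
    while total >> 16:
        total = (total & 0xFFFF) + (total >> 16)
    return ~total & 0xFFFF


def build_header(src: str, dst: str) -> bytes:
    """Build a minimal 20-byte IPv4 header (no payload) with a valid checksum."""
    fields = struct.pack("!BBHHHBBH4s4s", 0x45, 0, 20, 0, 0, 64, 17, 0,
                         socket.inet_aton(src), socket.inet_aton(dst))
    checksum = ipv4_checksum(fields)
    return fields[:10] + struct.pack("!H", checksum) + fields[12:]


def swap_ipv4_addresses(packet: bytes) -> bytes:
    """Return a copy of an IPv4 packet with source and destination swapped."""
    if len(packet) < 20 or packet[0] >> 4 != 4:
        raise ValueError("not an IPv4 packet")
    source, destination = packet[12:16], packet[16:20]
    return packet[:12] + destination + source + packet[20:]


request = build_header("192.168.0.10", "192.168.0.11")
reply = swap_ipv4_addresses(request)
print(socket.inet_ntoa(reply[12:16]), "->", socket.inet_ntoa(reply[16:20]))
```

A real forwarding application would typically also have to handle the Ethernet header; that part is outside the scope of this sketch.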

In order to test reflector an ethernet cable must be connected between either two p2041rdb targets or between a work-PC and the p2041rdb target.

Connect two targets by ethernet and do the following steps to test connection with usdpaa:

- Boot Board A with uImage-p2041rdb-usdpaa-enea.dtb

- Boot Board B with uImage-p2041rdb.dtb

- On board A do the following:

# Configure which Ethernet ports should be used by DPAA

$ vi config-p2041rdb.xml

<cfgdata>

<config>

<engine name="fm0">

<port type="MAC" number="2"

policy="hash_ipsec_src_dst_spi_policy1"/>

<port type="MAC" number="3"

policy="hash_ipsec_src_dst_spi_policy2"/>

<port type="MAC" number="4"

policy="hash_ipsec_src_dst_spi_policy3"/>

<port type="MAC" number="5"

policy="hash_ipsec_src_dst_spi_policy4"/>

</engine>

</config>

</cfgdata>

$ fmc -c config-p2041rdb.xml -p /usr/etc/usdpaa_policy_hash_ipv4.xml -a

# start reflector and check the ethernet ports:

$ reflector

reflector> ifconfig

- On board B do the following:

# Configure the network interface to which you connected the Ethernet cable, by choosing an IP and MAC address.

$ ifconfig -a

$ ifconfig <eth-x> 192.168.0.10 netmask 255.255.255.0 up

# Add a static ARP entry that maps Board A's IP address to its hw address

$ arp -s 192.168.0.11 <hw-address> -i <eth-x>

# Ping Board A

$ ping 192.168.0.11
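The FMC configuration file edited on Board A follows a regular pattern: one port entry per MAC, each with its own policy. If the file needs to be produced for a different set of ports, it can be generated instead of edited by hand. The sketch below uses Python's standard XML library; the function name `build_fmc_config` is hypothetical, and the element and attribute names are copied from the listing above rather than from the FMC documentation.

```python
import xml.etree.ElementTree as ET


def build_fmc_config(engine: str, ports: dict[int, str]) -> ET.Element:
    """Build a <cfgdata> tree with one MAC port entry per (number, policy)."""
    root = ET.Element("cfgdata")
    config = ET.SubElement(root, "config")
    engine_node = ET.SubElement(config, "engine", name=engine)
    for number, policy in sorted(ports.items()):
        ET.SubElement(engine_node, "port", type="MAC",
                      number=str(number), policy=policy)
    return root


# MAC ports 2-5 with policies 1-4, matching the listing above.
ports = {number: f"hash_ipsec_src_dst_spi_policy{index}"
         for index, number in enumerate(range(2, 6), start=1)}

root = build_fmc_config("fm0", ports)
ET.indent(root)  # pretty-printing; available from Python 3.9
print(ET.tostring(root, encoding="unicode"))
```

The printed XML can be saved as config-p2041rdb.xml and passed to fmc with the -c option as shown in the steps for Board A.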

3.7 Run SRA

The user space drivers from NXP/Freescale support usage of SRIO from Linux user space. SRA is a demo application from NXP/Freescale that writes from one SRIO interface to another, avoiding kernel interaction by using DMA (direct memory access). More information on the drivers can be found in the NXP/Freescale SDK [NXP-SDK].

In the test run below, two boards are prepared with SRIO interfaces. Both boards are initialized with a memory setting that sets the different SRIO memory spaces to different values. Then data from Board A's write preparation space is written to Board B's map space.

# start the srio application

$ sra

# setup board B (receiver)

sra> sra -attr port1 win_attr 1 nwrite nread

# set local memory to data predefined in 0x100000

sra> sra -op port1 1 0 0 s 0x100000

# read to view what is written to port 1

sra> sra -op port1 1 0 0 p 0x100000

# setup board A (transmitter)

sra> sra -attr port1 win_attr 1 nwrite nread

# set local memory and then read to confirm

sra> sra -op port1 1 0 0 s 0x100000

sra> sra -op port1 1 0 0 p 0x100000

# write what is in 'write preparing space' to outbound

sra> sra -op port1 1 0 0 w 0x100000

# confirm on board B that 'write preparation space' from board A is written in

# 'map space'

sra> sra -op port1 1 0 0 p 0x100000

           Board A                                Board B
    +-------------------+                  +-------------------+
    |     Map space     |          +------>|     Map space     |
    +-------------------+          |       +-------------------+
    |  Read data space  |          |       |  Read data space  |
    +-------------------+ outbound |       +-------------------+
    | write preparation |----------+       | write preparation |
    |       space       |                  |       space       |
    +-------------------+                  +-------------------+
    |  reserved space   |                  |  reserved space   |
    +-------------------+                  +-------------------+

Image 3.1 SRIO memory space

3.8 Throughput using USDPAA

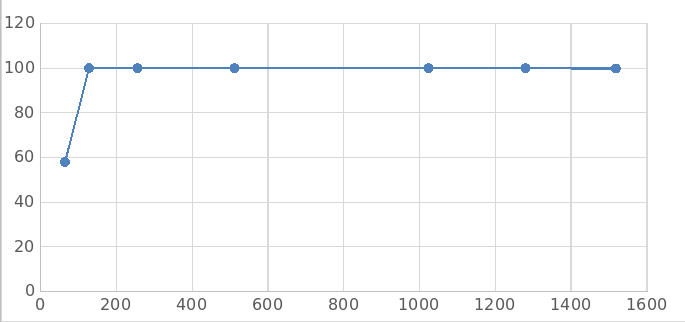

To demonstrate the performance achievable with the USDPAA framework, throughput was measured over a 10G Ethernet link; the results are presented below.

Performance was measured with Spirent Test Center, used as a packet generator together with the “Spirent Test Center” application, version 4.33. The test targets were connected to the Spirent Test Center through 10G Ethernet ports (XAUI-RISER card). On Enea Linux 6.0, the USDPAA reflector was used as the packet forwarding application. The resulting throughput measured with Spirent can be seen in Image 3.2 below.

Image 3.2 Throughput on p2041rdb over a 10G ethernet port using USDPAA.

The x-axis shows the frame size in bytes, and the y-axis the aggregated throughput in megabits per second.
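When reading a throughput-versus-frame-size plot, it helps to know the theoretical ceiling of the link for each frame size. The sketch below computes it for a 10G Ethernet link, using the standard per-frame overhead of 20 bytes on the wire (7-byte preamble, 1-byte start-of-frame delimiter and 12-byte inter-frame gap). The function names and the chosen frame sizes are illustrative; whether the measured values in Image 3.2 count this overhead is not stated here.

```python
# Per-frame overhead on the wire: preamble (7) + start-of-frame delimiter (1)
# + inter-frame gap (12).
ETHERNET_OVERHEAD_BYTES = 20


def max_frames_per_second(frame_bytes: int, link_bps: float = 10e9) -> float:
    """Theoretical maximum frame rate for a given frame size."""
    return link_bps / ((frame_bytes + ETHERNET_OVERHEAD_BYTES) * 8)


def max_throughput_mbps(frame_bytes: int, link_bps: float = 10e9) -> float:
    """Theoretical maximum throughput of frame data in megabits per second."""
    return max_frames_per_second(frame_bytes, link_bps) * frame_bytes * 8 / 1e6


for size in (64, 128, 256, 512, 1024, 1518):
    print(f"{size:>5} B: {max_frames_per_second(size) / 1e6:6.2f} Mpps, "
          f"{max_throughput_mbps(size):8.2f} Mbit/s")
```

For 64-byte frames this gives about 14.88 million frames per second, or roughly 7619 Mbit/s of frame data, which is why small frames can never reach the nominal 10000 Mbit/s.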

[NXP-SDK] QorIQ SDK 1.9 Documentation - https://freescale.sdlproducts.com/LiveContent/web/pub.xql?c=t&action=home&pub=QorIQ_SDK_1.9&lang=en-US#addHistory=true&filename=GUID-81837065-81AD-449B-8572-E96C3EED636F.xml&docid=GUID-81837065-81AD-449B-8572-E96C3EED636F&inner_id=&tid=&query=&scope=&resource=&toc=false&eventType=lcContent.loadHome

[KERN-PARA] Kernel Parameters, https://www.kernel.org/doc/Documentation/kernel-parameters.txt

[RCU] Paul McKenney, Priority-Boosting RCU Read-Side Critical Section, https://lwn.net/Articles/220677/

[HOTPLUG] https://www.kernel.org/doc/Documentation/cpu-hotplug.txt

[DPDK] http://dpdk.org/

[CYCLIC] https://rt.wiki.kernel.org/index.php/Cyclictest

[STRESS] http://linux.die.net/man/1/stress

[CW] http://www.nxp.com/products/software-and-tools/software-development-tools/codewarrior-development-tools:CW_HOME